Introduction

Initially designed for Natural Language Processing (NLP), transformers have transcended their original domain and demonstrated remarkable capabilities in other fields, particularly computer vision and time-series forecasting. This expansion is fuelled by their self-attention mechanisms, scalability, and ability to capture long-range dependencies. While convolutional neural networks (CNNs) dominated computer vision and recurrent neural networks (RNNs) were the standard for time-series data, transformers have emerged as competitive and, in some cases, superior alternatives. Many professionals enrolling in a Data Scientist Course are now focusing on transformer models due to their cross-domain applications.

Transformers in Computer Vision (ViTs & More)

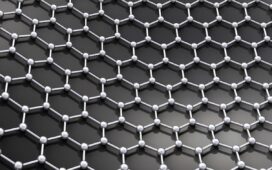

Transformers are redefining how machines process images, replacing CNNs in various computer vision applications. Vision Transformers (ViTs) and their variations leverage the self-attention mechanism to analyse images in a way that overcomes some of the limitations of CNNs.

Vision Transformers (ViT) – The Shift from CNNs

The Vision Transformer (ViT), introduced by Google, treats images as sequences of patches, similar to how NLP models process word tokens.

Instead of convolutions, it uses multi-head self-attention to capture global dependencies.

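Unlike CNNs, whose receptive fields are fixed by the filter size and grow only gradually with depth, transformers let every patch attend to every other patch within a single layer, so they process the image holistically and learn relationships between distant parts of it.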
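The patch-sequence idea can be sketched in a few lines of plain Python. This is only a minimal illustration under simplifying assumptions: a single-channel image stored as a list of rows, with no learned projection or position embeddings. The function name `image_to_patches` is hypothetical and is not taken from any ViT library.

```python
def image_to_patches(image, patch_size):
    """Split a 2-D grayscale image (a list of rows) into flattened square patches.

    Each returned patch plays the role of one "token" in the sequence that a
    ViT-style model would consume.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")

    patches = []
    # Walk over the image in patch-sized steps, row-major order.
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[row][col]
                for row in range(top, top + patch_size)
                for col in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


# A toy 4x4 "image" whose pixel values are 0..15.
image = [[row * 4 + col for col in range(4)] for row in range(4)]
patches = image_to_patches(image, patch_size=2)
print(len(patches))  # number of tokens in the sequence
print(patches[0])    # pixels of the top-left patch
```

In a real model each flattened patch would then be projected to an embedding vector and combined with position information before reaching the attention layers.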

Benefits of Vision Transformers

- Better Global Context Awareness: Unlike CNNs, which rely on local filters, ViTs more efficiently capture long-range dependencies.

- Less Inductive Bias: CNNs inherently assume locality and translation invariance, whereas ViTs learn patterns with fewer pre-imposed constraints.

- Improved Performance on Large Datasets: When trained on vast image datasets, ViTs often outperform CNNs, particularly in object detection and classification.

Computer Vision Applications

- Object Detection: Facebook’s Detection TRansformer (DETR) uses transformers for end-to-end object detection, replacing hand-crafted anchor boxes with learned object queries. It eliminates the need for non-maximum suppression (NMS), simplifying the pipeline.

- Image Classification: ViTs excel in large-scale image classification, matching or surpassing CNNs in benchmarks like ImageNet. Hybrid approaches, such as ConViT, which builds convolution-like locality into a transformer's attention layers, leverage the strengths of both architectures.

- Image Segmentation: Segmenter and MaskFormer integrate transformers for improved semantic segmentation, helping in autonomous driving and medical imaging.

- Medical Imaging: Transformers process CT scans, MRIs, and X-rays, aiding in tumour detection, anomaly classification, and disease diagnosis. Many professionals specialising in medical AI enrol in a data science course to strengthen their skills in transformer-based image analysis. Several cities offer technical courses designed specifically for the medical segment; a specialised Data Scientist Course in Pune, for instance, could focus exclusively on how transformers are used in medical imaging.

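- Super-Resolution & Image Generation: Generative models like DALL·E and Stable Diffusion rely on transformer components to generate high-resolution images from textual descriptions. The original DALL·E is an autoregressive transformer, while Stable Diffusion pairs a transformer text encoder with attention layers inside its diffusion model.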

Transformers in Time-Series Forecasting

Traditional time-series models relied on RNNs, LSTMs, and ARIMA-based statistical methods. However, transformers, particularly Temporal Fusion Transformers (TFT) and Time-Series Transformers (TST), have revolutionised forecasting by effectively handling long-range dependencies.

Why Use Transformers for Time-Series Data?

- Better Handling of Long-Term Dependencies: Unlike RNNs and LSTMs, which must carry information through every intermediate step, transformers let each time step attend directly to any other, so they capture long-term trends without the vanishing gradient issues of recurrence.

- Parallel Processing: RNNs process data sequentially, whereas transformers process all time steps of a sequence in parallel during training, drastically improving efficiency on modern hardware.

- Multivariate Forecasting: Transformers handle multiple correlated time-series signals, often outperforming traditional models.
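The first two points can be made concrete with a small sketch of scaled dot-product self-attention over a short series. It is written in dependency-free Python for readability and makes several simplifying assumptions that a real model would not: a single head, no learned query/key/value projections (the inputs are used directly), and no positional encoding or masking. The names `softmax` and `self_attention` are illustrative only.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(sequence):
    """Single-head scaled dot-product self-attention with identity projections.

    sequence: list of equal-length feature vectors, one per time step.
    Returns (outputs, weights), where weights[i][j] is how strongly
    step i attends to step j.
    """
    dim = len(sequence[0])
    outputs, weights = [], []
    # Each query row is computed independently of the others, which is why
    # real implementations evaluate all rows at once as one matrix product.
    for query in sequence:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in sequence
        ]
        row = softmax(scores)
        weights.append(row)
        outputs.append([
            sum(w * value[i] for w, value in zip(row, sequence))
            for i in range(dim)
        ])
    return outputs, weights


# Five time steps; the first and last carry the same pattern.
series = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(series)
# Step 0 attends to the distant step 4 exactly as strongly as to itself.
print(weights[0])
```

The distance between two time steps plays no role in the score, which is the sense in which attention reaches long-range dependencies in a single hop rather than through a chain of recurrent updates.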

Time-Series Transformer Models

- Temporal Fusion Transformer (TFT): TFT combines self-attention, gating mechanisms, and recurrent layers, adapting to both long-term trends and short-term fluctuations. It is particularly useful in financial forecasting, demand prediction, and healthcare applications.

- Informer & Autoformer: Informer improves upon standard transformers with probabilistic sparse attention, making it scalable for long-sequence forecasting. Autoformer refines this by employing seasonal-trend decomposition, capturing periodic patterns efficiently.

- PatchTST: PatchTST treats a time series as a sequence of patches (similar to ViTs in vision) and applies self-attention across those patches to model trends. It is used for stock price prediction, climate modelling, and sales forecasting. Students in a Data Scientist Course often explore PatchTST due to its efficiency in handling time-series data.
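Two of the ideas in this list, seasonal-trend decomposition and patching, are easy to illustrate on a toy series. The sketch below is not the published Autoformer or PatchTST code: it assumes a simple centred moving average as the trend estimate and plain fixed-length windows as patches, and the function names `moving_average`, `decompose`, and `make_patches` are hypothetical.

```python
def moving_average(series, window):
    """Centred moving average; the ends are padded by repeating edge values."""
    if window % 2 == 0:
        raise ValueError("window must be odd so the average is centred")
    half = window // 2
    padded = [series[0]] * half + list(series) + [series[-1]] * half
    return [sum(padded[i:i + window]) / window for i in range(len(series))]


def decompose(series, window):
    """Split a series into a smooth trend and the remainder around it."""
    trend = moving_average(series, window)
    seasonal = [x - t for x, t in zip(series, trend)]
    return trend, seasonal


def make_patches(series, patch_len, stride):
    """Cut a series into (possibly overlapping) fixed-length patches."""
    last_start = len(series) - patch_len
    return [
        series[start:start + patch_len]
        for start in range(0, last_start + 1, stride)
    ]


# A rising series with an alternating wiggle on top.
series = [float(t) + (1.0 if t % 2 else -1.0) for t in range(8)]
trend, seasonal = decompose(series, window=3)
patches = make_patches(series, patch_len=4, stride=2)
print(trend)
print(seasonal)
print(len(patches))  # number of patch tokens fed to the attention layers
```

Patching shortens the sequence that attention has to process, and decomposition lets a model treat the slow trend and the repeating component separately.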

Time-Series Applications

- Financial Market Predictions: Transformers’ ability to analyse large datasets with complex dependencies benefits stock price prediction, risk analysis, and portfolio management. Hedge funds and trading firms use transformers to detect market patterns and support real-time trading decisions.

- Healthcare & Patient Monitoring: By analysing continuous patient data, transformers predict disease progression, ICU readmissions, and early-onset conditions. Wearable devices use transformers for heart rate and activity pattern recognition.

- Energy Demand Forecasting: Transformers help in predicting electricity consumption, renewable energy outputs, and supply-chain demand.

- Anomaly Detection in IoT & Cybersecurity: Transformers detect anomalies in network traffic, financial transactions (fraud detection), and industrial sensor data. Cybersecurity professionals training in a Data Scientist Course learn to leverage transformers for intrusion detection.

- Weather & Climate Forecasting: Time-series transformers improve long-range weather prediction, the tracking of climate patterns and temperature changes, and natural disaster forecasting.

Challenges and Future Prospects

While transformers excel in both computer vision and time-series forecasting, they come with some specific challenges that professionals need to handle in real-world scenarios.

- High Computational Cost: Training requires extensive hardware (TPUs/GPUs), which can put these models out of reach for smaller firms.

- Large Data Requirements: Transformers perform best with massive datasets; small-scale applications may struggle.

- Interpretability Issues: CNN feature maps can be visualised fairly directly, whereas interpreting attention weights in transformers remains complex.
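A rough back-of-the-envelope calculation shows where much of the computational cost comes from: full self-attention compares every token with every other token, so the number of attention scores grows with the square of the sequence length. The sketch below assumes a square image cut into square patches; the 224-pixel image and 16-pixel patch are illustrative values, and `attention_cost` is a hypothetical helper, not a library function.

```python
def attention_cost(image_size, patch_size):
    """Return (tokens, pairwise_scores) for one attention head in one layer.

    Assumes a square image divided evenly into square patches and full
    (non-sparse) self-attention, where every token attends to every token.
    """
    tokens = (image_size // patch_size) ** 2
    return tokens, tokens * tokens


for size in (224, 448):
    tokens, scores = attention_cost(size, patch_size=16)
    print(f"{size}x{size} image: {tokens} tokens, {scores} attention scores")
```

Doubling the image side from 224 to 448 pixels quadruples the token count (196 to 784) and multiplies the number of attention scores by sixteen (38,416 to 614,656), per head and per layer.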

Future Directions

Some of the advancements foreseen in transformer technology, which an up-to-date Data Science Course in Pune or a similar urban learning hub would cover, are listed here.

- Hybrid Architectures: Combining CNNs with transformers for efficient computation.

- Efficient Transformers: Research on sparse attention, memory-efficient models, and distillation techniques is making transformers viable for low-resource environments.

- Real-Time Processing: Optimising transformer models for edge devices, IoT, and mobile applications.

Conclusion

Transformers have expanded far beyond NLP, revolutionising computer vision and time-series analysis. ViTs now rival and, in some cases, replace CNNs in image classification, object detection, and medical imaging, while time-series transformers often outperform traditional models in finance, healthcare, and energy forecasting. Despite computational challenges, continuous research is making transformers more efficient and accessible, paving the way for further advancements in AI-driven applications. For professionals aiming to specialise in these fields, enrolling in a Data Scientist Course can provide essential knowledge and hands-on experience in transformer-based applications.

Business Name: ExcelR – Data Science, Data Analyst Course Training

Address: 1st Floor, East Court Phoenix Market City, F-02, Clover Park, Viman Nagar, Pune, Maharashtra 411014

Phone Number: 096997 53213

Email Id: enquiry@excelr.com